In our interview series, we meet with data privacy leaders from various industries to learn about their experiences, projects, and perspectives.

Do you have an interesting perspective you’d like to share? Contact us at blog(at)didomi(dot)io.

Will Shepherd is a Principal Solution Consultant at Civic Data, an Australian consultancy that helps organizations maximize the value of their digital marketing and analytics investments while ensuring privacy is built in from the start. Bridging the worlds of MarTech and compliance, Civic Data helps businesses design data ecosystems that are effective and ethical.

We sat down with Will to talk about what privacy by design looks like in practice, the evolving state of data privacy in Australia, what global teams can learn from its regulatory shift, and why ideas like server-side consent and consent lineage are becoming essential for modern data management.

Rethinking data collection: From more data to better purpose

Didomi: Can you start by introducing yourself and what you do at Civic Data?

Will: I’m Will, a Principal Consultant at Civic Data, based in Melbourne Australia. The broader team is mostly in Sydney, with colleagues in Singapore and Perth as well.

My role is focused on helping organisations understand how data is being collected and used in practice across their digital environments, and then working with them to bring that into line with privacy requirements in a way that still supports their marketing and analytics goals.

In practice, that usually starts with diagnosing how data is being collected, shared, and used across websites, apps, and marketing platforms, and then helping teams redesign those approaches so they’re compliant, fit for purpose, and still getting the most out of the technologies they’ve invested in.

A big part of the role is also connecting the dots across teams (marketing, technology, and legal) and helping them align on what’s going on, where the risks sit, and what needs to change.

You spent several years working in the martech world before specializing in privacy. How did that shift happen?

Before Civic Data, I spent about eight or nine years working in digital martech and adtech, and earlier on the client side in financial institutions here in Australia.

At the time, it really felt like the mindset was “collect as much data as possible and figure out how to use it later.” That just seemed to be how most platforms and teams operated.

When I moved to Civic Data in 2022, GDPR was already well established, and Australian regulation was starting to gain momentum. It was pretty clear that a lot was about to change in how organisations approached data.

What I found interesting was being able to take that existing technical and platform experience and apply it through a privacy lens, not just understanding how things worked, but what they meant from a compliance and data handling perspective.

So the shift was really about starting to ask more fundamental questions: why are we collecting this data, what is it being used for, have we actually disclosed it properly, and do we even need it in the first place?

That’s the part of the work I still find most interesting, and challenging, especially as both the technologies and the regulations keep evolving.

What’s it been like to see that change from the inside?

It’s been interesting because the change hasn’t been linear.

On one hand, there’s definitely more awareness. Privacy is now part of the conversation in a way it wasn’t before, and most organisations recognise it’s something they need to take seriously.

But there’s still a gap between that awareness and what’s actually happening across digital environments.

What we often see is that the intention is there. Policies are updated, banners are often implemented. But when you look more closely at how things are configured or how data is flowing, it doesn’t always line up.

So from the inside, it feels less like a clean shift and more like an ongoing transition. The tools are improving, expectations are clearer, but there’s still a lot of work in bridging that gap. That’s usually where the risk sits.

Operationalizing privacy: How Civic Data turns privacy principles into practice

When a company first comes to Civic Data, what does your process look like?

Our first engagement with most clients is what we call a Digital Marketing Privacy Assessment.

That’s where we take a detailed look at what’s happening across their digital touchpoints, including websites, mobile apps, and anything a customer may engage with digitally.

We focus on what we call the customer experience layer: what a user would actually experience, or what can be technically observed from the outside. That’s intentional, because it’s the higher-risk area. It’s where regulators, and more technically aware users who know where to look, assess digital environments in practice.

In terms of what that involves, we review things like how personal information is being collected, how consent is implemented, what technologies and vendors are in play, and how all of that lines up with what’s disclosed in privacy policies.

While tools like cookie scanners and AI-driven policy reviews can surface signals, our approach goes a step further by connecting the dots and providing context around why data is being collected, how it aligns with disclosure, and how different technologies are working together in practice.

The output is typically a structured set of findings and prioritised recommendations that are hopefully meaningful and actionable, with clear context around why they matter and what to focus on next.

What types of issues or compliance gaps does the audit typically uncover?

There’s usually a pretty broad mix, but a few common themes come up.

One is unintentional data sharing, things like features being switched on that collect or send different types of personal or sensitive information without the business fully realising.

We also see gaps in consent pretty often. For example, where tracking is happening for things like advertising or analytics, but there isn’t a clear or effective way for users to opt out.

Another big one is the disconnect between what’s written in privacy policies and what’s actually happening on the site.

And then there’s undocumented third-party data sharing, where data is flowing to vendors the business isn’t fully aware of, usually through tag piggybacking or default platform settings.
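As a rough illustration of the disclosure check described above, the sketch below compares the hostnames seen in a page’s network requests against the vendor domains named in a privacy policy. It assumes you already have the observed request URLs (for example, exported from browser developer tools) and a hand-maintained list of disclosed vendor domains. The function name and the `.example` domains are hypothetical, and this is not Civic Data’s own tooling.

```python
from urllib.parse import urlparse


def undisclosed_hosts(observed_urls, disclosed_domains, first_party_domain):
    """Return third-party hostnames seen in traffic that match no disclosed vendor domain."""
    allowed = {d.lower().lstrip(".") for d in disclosed_domains}
    allowed.add(first_party_domain.lower())
    flagged = set()
    for url in observed_urls:
        host = (urlparse(url).hostname or "").lower()
        if not host:
            continue  # skip malformed or relative URLs
        # A host is covered if it equals an allowed domain or is a subdomain of one.
        if any(host == d or host.endswith("." + d) for d in allowed):
            continue
        flagged.add(host)
    return sorted(flagged)


observed = [
    "https://www.retailer.example/checkout",
    "https://analytics.vendor-a.example/collect?id=123",
    "https://px.vendor-b.example/tr?ev=Purchase",
]
print(undisclosed_hosts(observed, ["vendor-a.example"], "retailer.example"))
# ['px.vendor-b.example']
```

A hostname check like this only surfaces candidates. It won’t see data sent to a disclosed vendor for an undisclosed purpose, or flows that happen server-side, which is why the findings still need human context.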

A lot of these issues sit outside what automated tools can really pick up on their own, because you need context to understand what’s actually going on.

It sounds like privacy touches many parts of an organization. How do you help teams navigate that?

It can get pretty messy at times, because different parts of the business aren’t always aligned or aware of what others are doing.

In the context of our assessments, marketing might enable something through an agency to improve targeting, without realising what data is being collected. Tech might build something that unintentionally exposes personal information. And legal is often working from what should be happening, not what’s actually happening.

We often find ourselves sitting in the middle of those conversations (sometimes literally) between marketing, tech, and privacy, helping (once again) connect the dots on what’s being collected, how it’s being used, and how it should be disclosed.

A big part of the role is just getting everyone aligned on reality. Once that’s clear, it becomes much easier to decide what needs to change and how to move forward.

Once those issues are identified, how does the work evolve?

It usually starts out quite tactical: fixing immediate issues, turning off features, updating disclosures, and making sure the right controls are in place.

It then naturally shifts into something more strategic and layered across their broader roadmap, looking at privacy by design, consent management, and data retention in a way that actually supports how the MarTech stack is meant to perform.

What’s often surprising for clients is that the same audit that establishes their regulatory position also highlights inefficiencies, things like redundant tags, unused tools, or data that isn’t really adding value.

So it ends up being more than just a compliance exercise. It becomes a way to streamline the stack, improve measurement, and get more out of the technologies they’ve already invested in.

That’s really where the commercial and regulatory sides start to come together.

Is there a recent project that illustrates that approach?

Our work with CarSales is a good example.

It started with understanding what was happening across their data collection and tracking setup, particularly in the context of cookie deprecation, and where there were gaps or inefficiencies.

From there, we helped validate what those changes meant in practice, not just from a privacy perspective but in terms of media spend, measurement, and licence costs.

What it showed is that when you get the data foundations right, it doesn’t just support compliance; it improves how everything else performs. Measurement becomes more reliable, media spend is more efficient, and you’re actually getting the value you expect from the platforms you’re investing in.

Australia’s privacy shift: Tranche 2 and the opt-out mandate

Where does Australia stand in terms of its current privacy law?

Tranche 1 went live in December 2024 and significantly expanded the OAIC’s enforcement toolkit, including things like compliance notices and infringement powers without needing to go through the courts.

Tranche 2 is expected to cover areas like AI, tracking technologies, and individual rights, but there’s no confirmed timeline yet. It comes up in pretty much every conversation: people are always asking us when it’s coming, and as far as I’m aware, no one really knows at this stage.

I think where there’s a bit of a gap at the moment is how the market is interpreting that. Some organisations are treating it as a bit of a pause, waiting for Tranche 2 before making more meaningful changes.

In practice, that’s probably not the right way to look at it. The OAIC has been clear that things like pixels and ad tech fall under existing privacy law, so those expectations are already in play.

So it’s less about waiting for what’s coming next, and more about getting comfortable with what already applies today.

Has the Australian regulator provided guidance to businesses in the meantime?

Yes, the OAIC has provided some pretty clear signals, particularly through its guidance on how the Australian Privacy Principles apply in digital environments, including the use of tracking pixels and similar technologies.

A big theme in that guidance is around organisations unintentionally collecting and sharing personal, and in some cases sensitive, information through tracking technologies, often without fully understanding it or disclosing it properly.

At the moment, you don’t necessarily need to force an explicit opt-in (implied consent can still apply in many cases), but if you’re using cookies or tracking technologies for things like advertising, there’s a growing expectation that users should have a clear and accessible way to opt out.

What the OAIC has really emphasised is that responsibility sits with the organisation deploying the technology. It’s not enough to rely on third-party settings or assume a vendor is handling it correctly; instead, you need to understand what’s being collected, how it’s being used, and how that’s communicated to users.

We’ve also seen enforcement and regulatory focus in areas where sensitive information has been collected via tracking technologies without appropriate safeguards or transparency.

So the direction of travel is pretty clear. It’s not just about having something in place, but making sure it actually reflects what’s happening in practice, both from a technical and a user experience perspective.

What is the practical challenge for businesses in implementing this required opt-out mechanism?

The main challenge is really around implementation.

It’s not just about having something in place; it’s about making sure it actually works at a technical level. That means understanding how tracking is set up, how different tools behave, and making sure those systems respond properly when a user opts out.
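To make “systems respond properly” concrete, here is a minimal sketch of an opt-out model in which each tag is labelled with a purpose, tags fire by default, and a user’s opt-out suppresses every tag tied to that purpose. The `Tag` type, the purpose labels, and the tag names are hypothetical; a real CMP or tag manager has its own configuration model. The second function is the check that matters in practice: comparing what actually fired against what should have.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tag:
    name: str
    purpose: str  # hypothetical labels, e.g. "analytics" or "advertising"


def tags_allowed_to_fire(tags, opted_out_purposes):
    """Opt-out model: a tag fires unless the user has opted out of its purpose."""
    return [t.name for t in tags if t.purpose not in opted_out_purposes]


def opt_out_violations(fired_tag_names, tags, opted_out_purposes):
    """Tags that actually fired but shouldn't have (including tags missing from the registry)."""
    allowed = set(tags_allowed_to_fire(tags, opted_out_purposes))
    return sorted(set(fired_tag_names) - allowed)


registry = [Tag("site_analytics", "analytics"), Tag("retargeting_pixel", "advertising")]
print(tags_allowed_to_fire(registry, {"advertising"}))  # ['site_analytics']
print(opt_out_violations(["site_analytics", "retargeting_pixel"], registry, {"advertising"}))
# ['retargeting_pixel']
```

Treating unregistered tags as violations is a deliberate choice: a tag nobody has mapped to a purpose is exactly the kind of thing that slips through when intent and execution diverge.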

That’s where things tend to fall down: not in the intent, but in the execution.

What does ‘easy’ look like in reality?

That’s where it gets a bit tricky.

In practice, it means the opt-out needs to be easy to find and just as easy to use as opting in, not buried in a privacy policy or hidden behind multiple steps.

What we still see quite a lot is businesses linking out to third-party settings, like Google’s ad preferences, which creates a pretty fragmented experience for users.

The expectation is increasingly that if you’re deploying the technology, you should be providing that control directly (usually through something like a consent management platform, or CMP) so users can manage their preferences in one place.

And importantly, it needs to actually work in practice. It’s not just about presenting a choice, it’s about making sure that choice is reflected in how tracking behaves.

Is this changing how companies think about marketing?

Definitely. Australia’s always had relatively strong enforcement around one-to-one channels like email and SMS, so businesses are generally quite familiar with those rules.

What’s changing is that cookies, trackers, and even server-side tools are starting to be treated in a similar way. The lines between what is and isn’t considered direct marketing are starting to blur, particularly as these technologies get better at targeting individual users.

It’s not the same as email or SMS, but it’s getting closer, and that means the same expectations are increasingly being applied.

And beyond cookies, are there any other areas organizations are preparing for?

Children’s privacy is definitely one area I’ve been taking a bit more of an interest in recently.

It came up in Tranche 1, which is great, but the practical side is still being worked through. Take universities, for example: they’re marketing to prospective students who might be under 18, which raises questions around things like parental consent and how that works in a digital context.

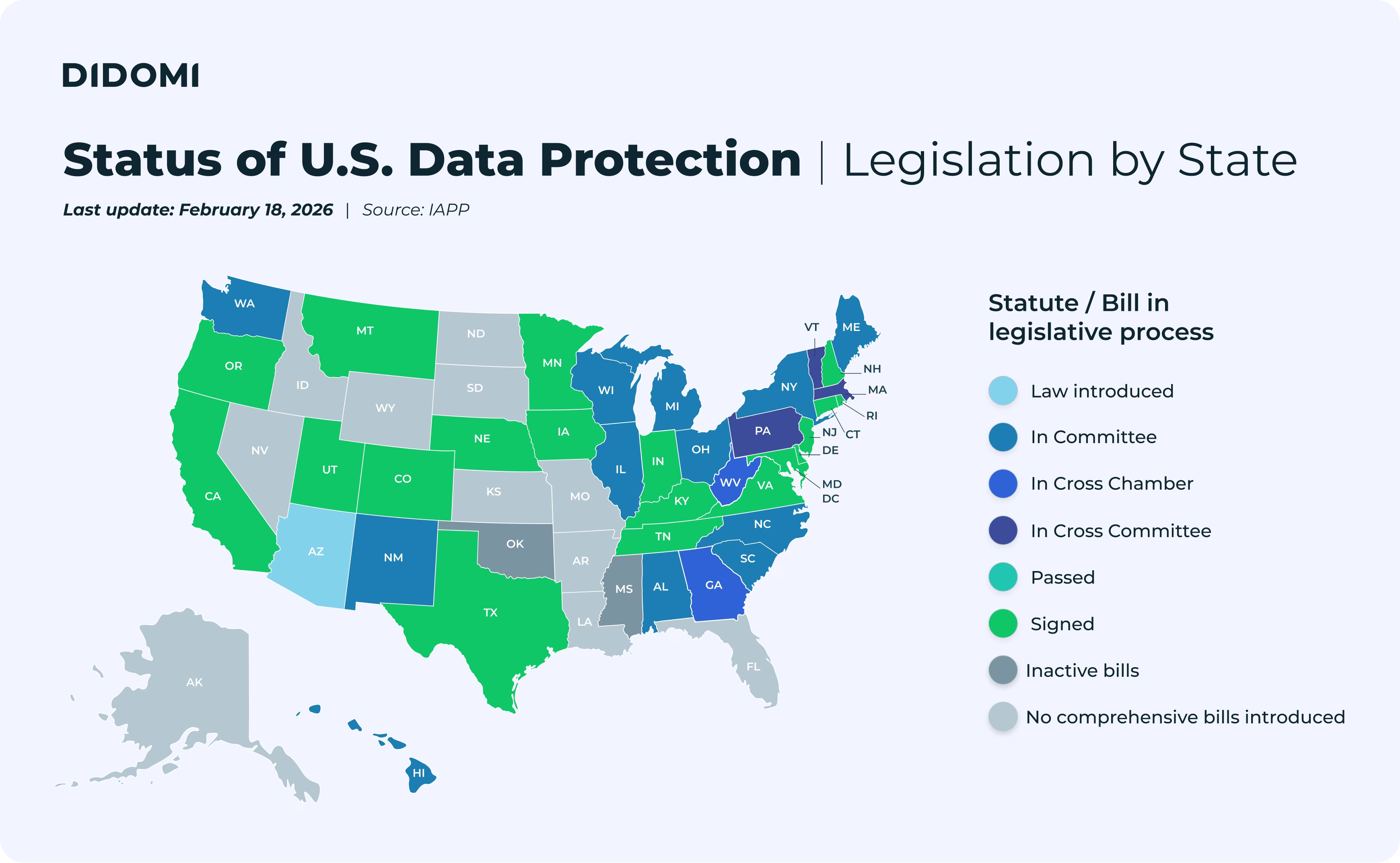

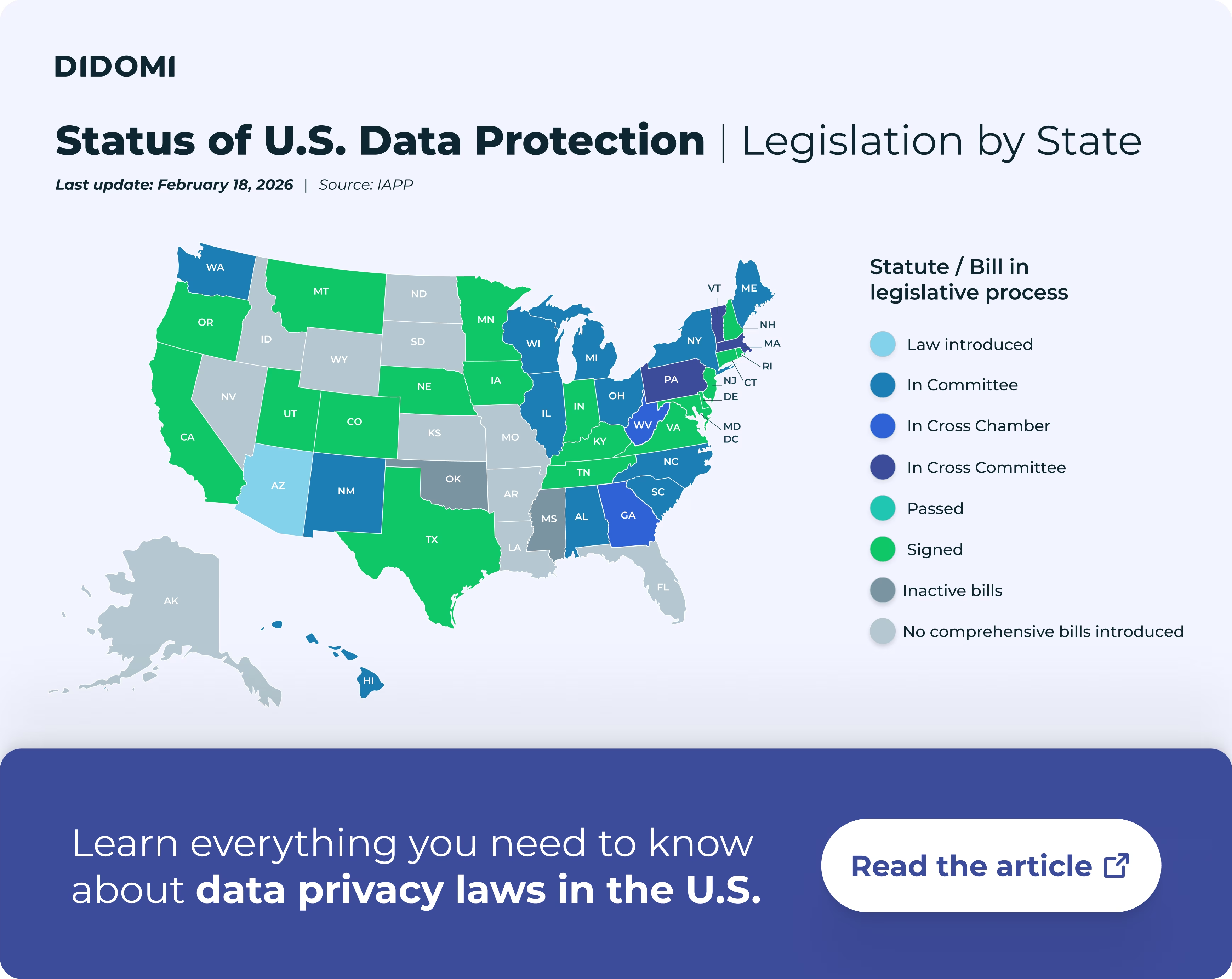

More broadly, we’re seeing organisations increasingly operate across multiple markets, which brings a lot more complexity. It’s no longer just about complying with one set of rules, businesses are having to think about how different privacy frameworks apply across jurisdictions.

Once upon a time, it was fairly common to default to GDPR as the most conservative approach and apply that everywhere.

Now it’s a bit more nuanced. Regulations are evolving and, in some cases, diverging (particularly with models like CCPA/CPRA in the U.S.), so it’s less about applying a single standard globally, and more about understanding how those differences actually play out in practice.

The next frontier of privacy: Server-side data and consent lineage

Many people still think of consent in terms of cookie banners and pop-ups. How is that changing with newer, server-side technologies?

It’s definitely shifting. When people think about consent, they usually picture the cookie banner as something that controls whether a tag fires on a website.

But with server-side setups, that visibility starts to drop away. Once data is being processed server-side, you can’t rely on what you see in the browser to understand what’s actually happening.

The core principles don’t change (things like disclosure, consent, and purpose still apply) but they need to be enforced differently. You have to think about how those choices carry through into backend systems and APIs, not just the front-end experience.
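One way to picture carrying consent through to the backend is a server-side collector that receives the user’s choices alongside each event and decides, per destination, whether to forward it. The sketch below assumes a hypothetical event shape (a `consent` object with an `opted_out` list) and hypothetical destination names; it is not any particular vendor’s server-side API.

```python
from __future__ import annotations

from typing import Any

# Hypothetical downstream destinations and the purpose each one serves.
DESTINATION_PURPOSES = {
    "analytics_warehouse": "analytics",
    "ad_platform_conversions": "advertising",
}


def route_event(event: dict[str, Any]) -> list[str]:
    """Return the destinations a server-side collector may forward this event to.

    The user's choices travel with the event, so the backend enforces them
    itself instead of assuming the browser already did.
    """
    consent = event.get("consent")
    if consent is None:
        return []  # fail closed: no consent state reached the server
    opted_out = set(consent.get("opted_out", []))
    return [dest for dest, purpose in DESTINATION_PURPOSES.items() if purpose not in opted_out]


event = {"name": "purchase", "value": 99.0, "consent": {"opted_out": ["advertising"]}}
print(route_event(event))  # ['analytics_warehouse']
```

The collector fails closed when an event arrives with no consent information at all. Under an opt-out model you could instead forward by default, but failing closed makes a broken consent hand-off visible rather than silently sending everything downstream.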

That’s where it gets more complex. If you don’t have that layer set up properly, it’s very hard to detect issues through standard scanning or monitoring.

And with more organisations moving towards server-side architectures and CDPs, it’s becoming a much more important part of how consent is managed in practice.

Are technology vendors starting to help with that shift?

Yes, we’re definitely seeing vendors introduce more controls from a privacy perspective.

A lot of that comes through additional configuration options, particularly around data minimisation. For example, the Meta pixel lets you limit things like URL capture, or disable features like automatic advanced matching that can pick up personal information.
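The same data-minimisation idea can be applied before a page URL ever reaches a third party. The sketch below keeps only an allowlist of query parameters and drops the fragment. It is a generic illustration, not the Meta pixel’s own setting, and the allowlist and example URL are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical allowlist: campaign parameters considered safe to share.
SAFE_PARAMS = frozenset({"utm_source", "utm_medium", "utm_campaign"})


def minimise_url(url: str, safe_params=SAFE_PARAMS) -> str:
    """Keep only allowlisted query parameters and drop the fragment before sharing a URL."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True) if k in safe_params]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(minimise_url("https://shop.example/reset?email=jane%40example.com&utm_source=newsletter#token"))
# https://shop.example/reset?utm_source=newsletter
```

Paths can carry personal information too (for example, a profile path that includes a username), so an allowlist on query parameters is a starting point rather than a complete answer.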

They’re positive steps, but they also highlight how much responsibility still sits with the business. The tools are there, but they need to be understood and configured properly, otherwise the risk doesn’t really go away.

And what happens when all that data from different systems comes together?

That’s where things start to get more complex.

You’ve got data coming in from all over the place (websites, apps, CRM systems, third-party platforms) and it’s all being pulled together into backend systems like data warehouses or CDPs.

At that point, it’s less about the individual data points, and more about whether you still understand where that data came from and how it was collected.

That’s really where the idea of consent lineage comes in.

Could you elaborate on what consent lineage means in this context?

Consent lineage is really about being able to trace where data came from, and what consent and disclosure were applied at the time of collection.

What we see quite often is that once data is aggregated, that context starts to get lost. You end up with a clean dataset, but not always a clear understanding of what you’re actually allowed to do with it.

Individual platforms are usually pretty good at managing their own piece of the puzzle, but that doesn’t always carry through when everything is combined.
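A minimal way to keep that context is to attach collection metadata to each record and carry it through every merge. In the sketch below, the `Lineage` fields (source, collection time, the version of the privacy notice shown, permitted purposes) and the purpose names are hypothetical choices, not a standard schema. When records from different sources are combined, the merged record keeps every parent’s lineage and may only be used for purposes that all of its sources allowed.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Lineage:
    source: str                      # where the data was collected (hypothetical labels)
    collected_at: datetime
    notice_version: str              # privacy notice shown at collection time
    permitted_purposes: frozenset    # purposes consented to or disclosed at collection


@dataclass
class Record:
    customer_id: str
    attributes: dict
    lineage: tuple                   # one Lineage entry per contributing source

    def permitted_purposes(self) -> frozenset:
        # Most restrictive wins: a purpose is allowed only if every source allowed it.
        if not self.lineage:
            return frozenset()       # no lineage means no known basis for any use
        return frozenset.intersection(*(entry.permitted_purposes for entry in self.lineage))


def merge(records):
    """Combine records for one customer without discarding where each piece came from."""
    ids = {r.customer_id for r in records}
    if len(ids) != 1:
        raise ValueError("merge expects records for exactly one customer")
    attributes, lineage = {}, []
    for r in records:
        attributes.update(r.attributes)  # later records overwrite earlier values for the same key
        lineage.extend(r.lineage)
    return Record(ids.pop(), attributes, tuple(lineage))


def usable_for(records, purpose):
    """Filter to records whose full lineage permits the given purpose."""
    return [r for r in records if purpose in r.permitted_purposes()]


web = Lineage("web_signup_form", datetime(2024, 5, 1), "v3", frozenset({"analytics", "email_marketing"}))
crm = Lineage("crm_import", datetime(2023, 11, 2), "v2", frozenset({"analytics"}))
signup = Record("c1", {"email_domain": "example.com"}, (web,))
combined = merge([signup, Record("c1", {"lifetime_value": 420}, (crm,))])
print(sorted(combined.permitted_purposes()))  # ['analytics']
```

Taking the intersection is the conservative choice: enriching a record with data from a source that had narrower consent narrows what the combined record can be used for. The same check can run before a dataset is put to a new use, such as training an AI model.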

That becomes even more important when that data starts being used in newer ways (for example, feeding into AI models), where there’s often a lot of focus on the output but less on whether the underlying data was collected, and can be used, in the right way.

Without that visibility, it becomes very difficult to use that data in a way that’s both compliant and actually useful.

You’ve shared some fascinating insights into how data privacy is evolving. Looking ahead, what excites you most about where this field is heading?

What I find most interesting, and probably what keeps me engaged in this space, is how quickly things are changing.

It never really stands still. New technologies come in, expectations and regulations shift, and you’re constantly having to rethink how privacy actually works in practice.

I enjoy being close to that: helping businesses make sense of it as it’s happening, rather than trying to apply something static to something that’s always moving.

And like everyone else at the moment, AI is a good example of that. It’s moving quickly, and it’s raising a lot of the same underlying questions around data, consent, and how things should actually work.

—

To learn more about Will and his work, follow him on LinkedIn and visit civicdata.com.au.
